Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory have released an open-source simulation engine that can construct photorealistic environments to train and test autonomous vehicles.

Teaching neural networks to drive vehicles autonomously requires a large amount of data, much of which is difficult to collect in the real world with real vehicles. Researchers can’t simply crash a car to teach a neural network not to crash one, so they rely on simulation for this kind of data. That’s where simulated training environments like CSAIL’s VISTA 2.0 come in.

VISTA 2.0, an updated version of the team’s previous model VISTA, is a data-driven simulation environment that was photorealistically rendered from real-world data. It can simulate complex sensor types, interactive scenarios, and intersections at scale.

“Today, only companies have software like the type of simulation environments and capabilities of VISTA 2.0, and this software is proprietary. With this release, the research community will have access to a powerful new tool for accelerating the research and development of adaptive robust control for autonomous driving,” MIT Professor and CSAIL Director Daniela Rus, senior author on a paper about the research, said.

VISTA 2.0’s photorealistic environment reflects a recent trend in the autonomous vehicle industry. Developers are moving away from using human-designed simulation environments and towards using ones built from real-world data.

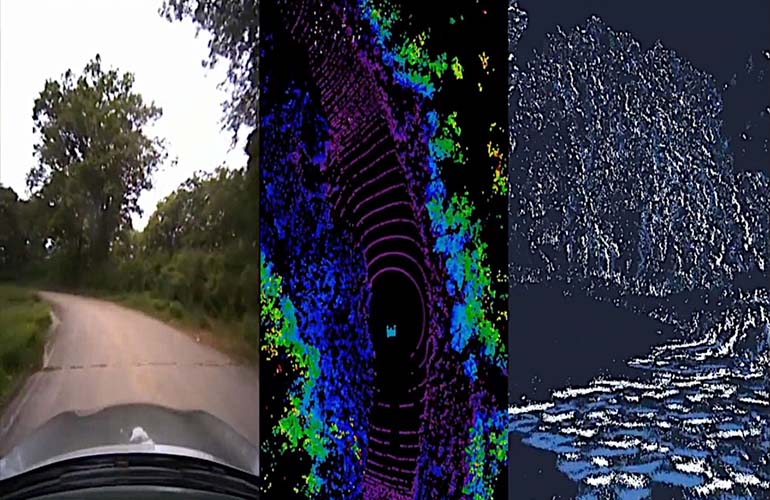

These environments are appealing because they allow for direct transfers to reality. However, it can be difficult to synthesize the richness and complexity of all the sensors autonomous vehicles need. For example, to replicate LiDAR in these environments, researchers essentially need to generate new 3D point clouds with millions of points using only a sparse view of the world.

To get around this, the MIT team projected the data collected from the car into a 3D space built from the LiDAR data. They then let a new virtual vehicle drive around in the vicinity of where the original vehicle had been and, with the help of neural networks, projected the sensory information back into the new virtual vehicle’s frame of view.
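The geometric core of that step, re-expressing recorded 3D points in the frame of a nearby virtual vehicle, can be sketched in a few lines of Python. This is only an illustrative sketch and not VISTA 2.0’s actual code or API: the function name `reproject_points`, its parameters, and the flat-ground assumption (the virtual vehicle differs from the recorded one only by a planar offset and a yaw angle) are all assumptions made for this example. The neural-network stage that fills in the sparse result is not shown.

```python
import math

def reproject_points(points, dx, dy, dyaw):
    """Express recorded (x, y, z) points in a virtual vehicle's frame.

    Hypothetical helper: the virtual vehicle is assumed to sit at offset
    (dx, dy) in the recorded vehicle's frame, rotated by dyaw radians
    about the vertical axis.
    """
    cos_y, sin_y = math.cos(dyaw), math.sin(dyaw)
    out = []
    for x, y, z in points:
        # Shift the origin to the virtual vehicle's position.
        tx, ty = x - dx, y - dy
        # Rotate by -dyaw so the axes align with the virtual vehicle's heading.
        out.append((cos_y * tx + sin_y * ty, -sin_y * tx + cos_y * ty, z))
    return out

# A point 10 m ahead of the recorded car, seen from a virtual car 2 m further along.
shifted = reproject_points([(10.0, 0.0, 1.0)], dx=2.0, dy=0.0, dyaw=0.0)
```

In this example the point ends up 8 m ahead of the virtual vehicle, at the same height. A full pipeline would go on to re-sample the transformed points into the virtual sensor’s scan pattern and densify them.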

VISTA 2.0, an open-source simulation engine from MIT CSAIL, can simulate environments for training self-driving cars. | Source: MIT CSAIL

The team also simulated event-based cameras in real time; these sensors operate at speeds greater than thousands of events per second. With all of these sensors simulated, users can move vehicles around in the simulation, simulate different types of events, and drop in brand-new vehicles that were not part of the original data.

“This is a massive jump in capabilities of data-driven simulation for autonomous vehicles, as well as the increase of scale and ability to handle greater driving complexity,” Alexander Amini, CSAIL PhD student and co-lead author on two new papers, together with fellow PhD student Tsun-Hsuan Wang, said. “VISTA 2.0 demonstrates the ability to simulate sensor data far beyond 2D RGB cameras, but also extremely high dimensional 3D lidars with millions of points, irregularly timed event-based cameras, and even interactive and dynamic scenarios with other vehicles as well.”

MIT’s team took a full-scale car out to test VISTA 2.0 in Devens, Massachusetts. The team saw an immediate transferability of results, with both failures and successes. Moving forward, CSAIL hopes to allow the neural network to understand and respond to gestures from other drivers, such as a wave, a nod, or a blinker switch of acknowledgement.

Amini and Wang wrote the paper alongside Zhijian Liu, MIT CSAIL PhD student; Igor Gilitschenski, assistant professor in computer science at the University of Toronto; Wilko Schwarting, AI research scientist and MIT CSAIL PhD ’20; Song Han, associate professor at MIT’s Department of Electrical Engineering and Computer Science; Sertac Karaman, associate professor of aeronautics and astronautics at MIT; and Daniela Rus, MIT professor and CSAIL director.
